AI in a Human World

The greatest risk of AI may not be what it replaces, but what we slowly stop practising ourselves. Loss of creativity. Loss of collaboration. Erosion of expertise. The illusion that speed matters more than our human effectiveness.

Over the last few weeks, in my work and in a few conversations, I’ve been pondering whether there are patterns of work where AI is more effective. A lot of content seems to be about when and how to use AI to produce more outputs faster, jumping straight to your tool of choice. But what about when the optimum choice is NOT ‘AI First’ and is instead ‘People First’?

In this post I’ve identified a few different patterns to share my thoughts on this challenge and when it is most effective to incorporate AI in a Human World.

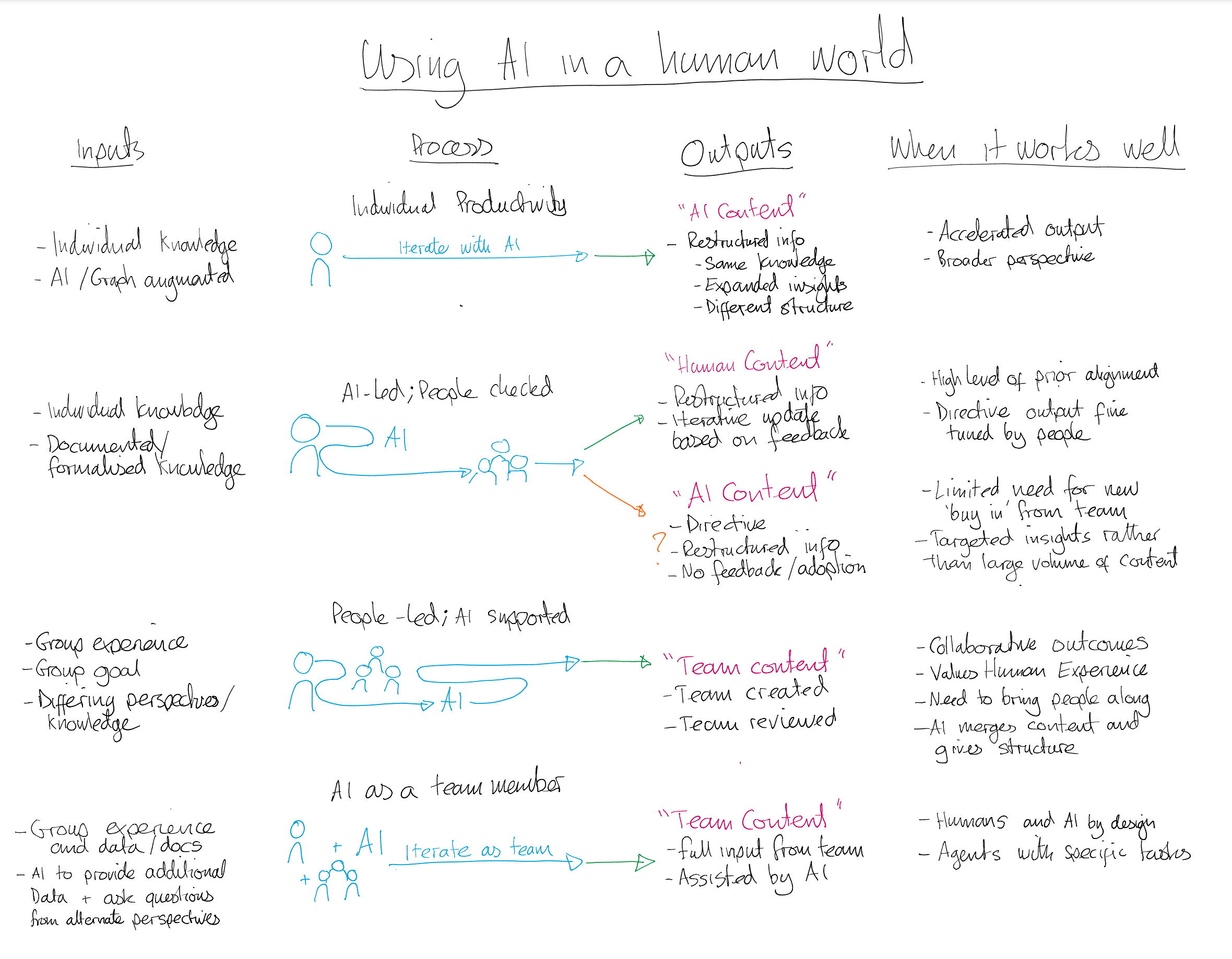

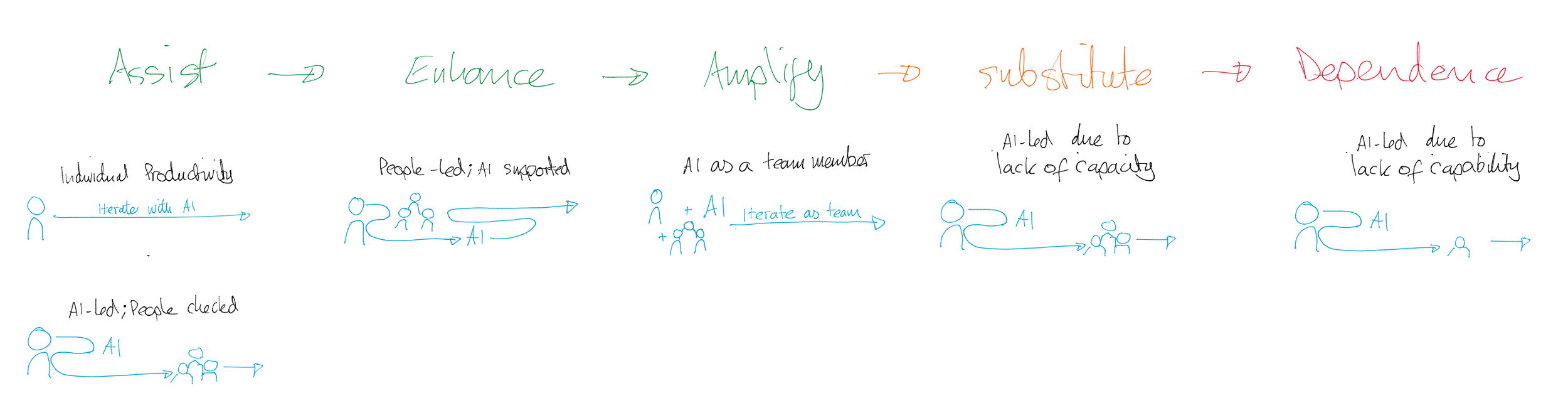

Accessible description (AI Generated) - A four-column handwritten diagram titled “Using AI in a Human World.” The columns are “Inputs,” “Process,” “Outputs,” and “When it works well.”

The diagram presents four models of human and AI collaboration: Individual productivity; AI-led, people-checked; People-led, AI-supported; and AI as a team member. These are broken down below.

For each of the patterns I’ve tried to describe when AI might be used either to do all or a chunk of the work, to restructure outputs, or to work more like a team member. In contrast to a lot of the content I see, I’ve also thought about when organisations should drive conversations with real people rather than AI.

To help identify which pattern might fit the type of work you’re doing, I’ve suggested some types of inputs and outputs that might be involved, and characteristics that describe when that pattern might work best.

Individual Productivity

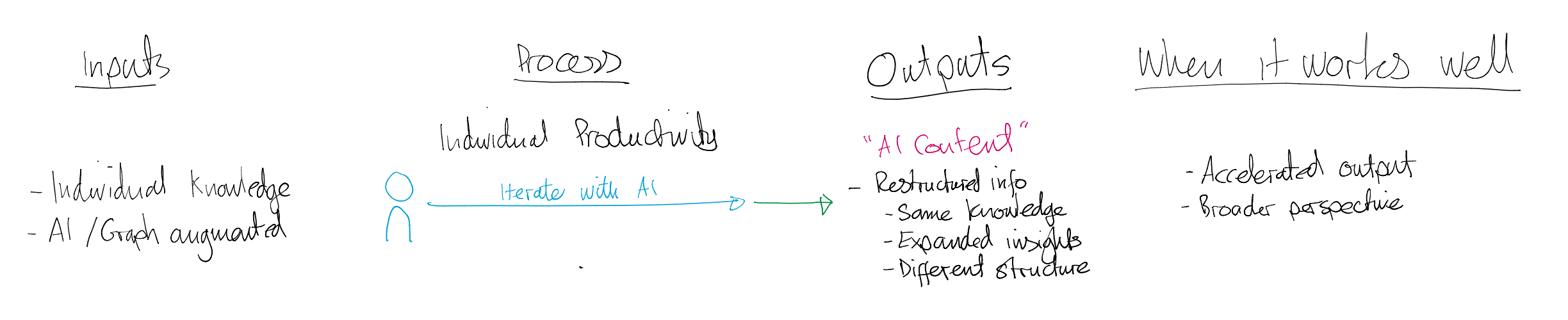

Accessible description (AI Generated) - Individual productivity

Inputs include individual knowledge and AI or graph-augmented information. A person iterates with AI to produce “AI Content,” including restructured information, expanded insights, and different structures. This approach works well for accelerated output and broader perspectives.

I see an entry point in using AI as a thought partner to help understand ideas, content, and product outputs. This is one of the common patterns I use day to day: taking my ideas, my work, my knowledge, and my experience, and using AI to challenge my thinking, add insights, and restructure it all into a format I might not have thought of. The result is AI-structured content, based on my thinking and ideas.

This means we can teach people how to ask questions, get additional perspectives, and accelerate towards the goal they’re aiming for with a broader, higher-quality perspective.

AI-led; People Checked

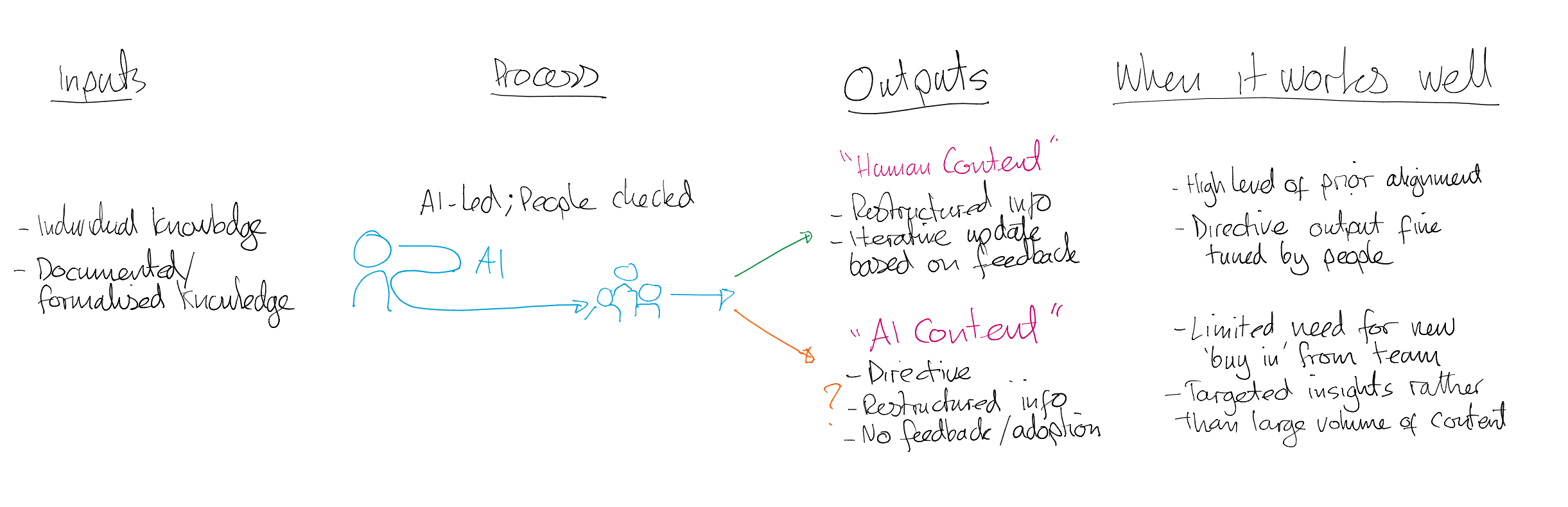

Accessible description (AI Generated) - AI-led, people-checked

Inputs include individual knowledge and documented or formalised knowledge. AI produces outputs reviewed by people. Outputs may be either “Human Content,” refined iteratively with feedback, or “AI Content,” which is directive and lacks feedback or adoption. This works best where there is high prior alignment, limited need for buy-in, and targeted insights rather than large volumes of content.

I saw an example of this recently in a workshop where, rather than sharing personal expertise, a person used Copilot Cowork as their first step. This produced a lot of content quickly but swamped discussion and didn’t give the individual’s experience the credibility it was due.

This group work pattern is where I think a bit of thought about the output might be beneficial. A lot of people seem to jump in and delegate in the rush to get to an output. I’ve considered a couple of scenarios to explore this pattern:

If the work is well understood by the group, and there is general consensus or alignment on the topic across the group, or if the outcomes are quite directive and the information is being disseminated to the group, this might be a valuable approach.

If there needs to be alignment across the group and a bringing together of ideas and people, then organisations must drive a people-centric approach to ensure SMEs are part of the collaboration and are brought along on the journey to reach consensus, as otherwise they might not be. Content can appear too ‘finished’ and so act as a barrier to thought or challenge.

This thinking means that we’re consciously looking for weaknesses in our approach to ensure that we’re supplementing our humans, not replacing them.

People-led; AI Supported

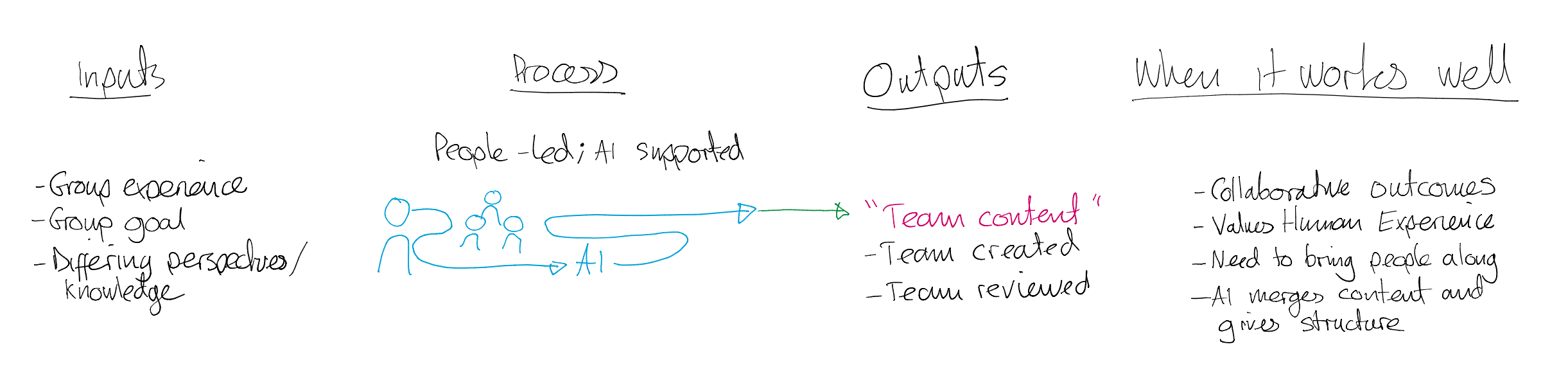

Accessible description (AI Generated) - People-led, AI-supported

Inputs include group experience, group goals, and differing perspectives. Teams collaborate with AI support to create “Team Content” that is team-created and team-reviewed. This model works well for collaborative outcomes, valuing human experience, bringing people along, and using AI to merge and structure ideas.

I recently wanted to build a framework that would enable me to gauge the capability, experience, and exposure of individuals in establishing AI governance in organisations. After capturing my initial thoughts, I asked a group of SMEs for input to help improve and iterate on the idea collaboratively, before then using AI to establish a more robust model.

I wanted to ensure I’d captured their expertise and perspectives before jumping to AI. I wanted to ensure all voices were heard and incorporated into a shared and collective view. Only once we’d shared and evolved our thoughts collectively did I feed them into AI to help evolve the thinking and shape a model that could be used to progress our goal.

The implication is that we show that we value our human experts and that they are the key to our success.

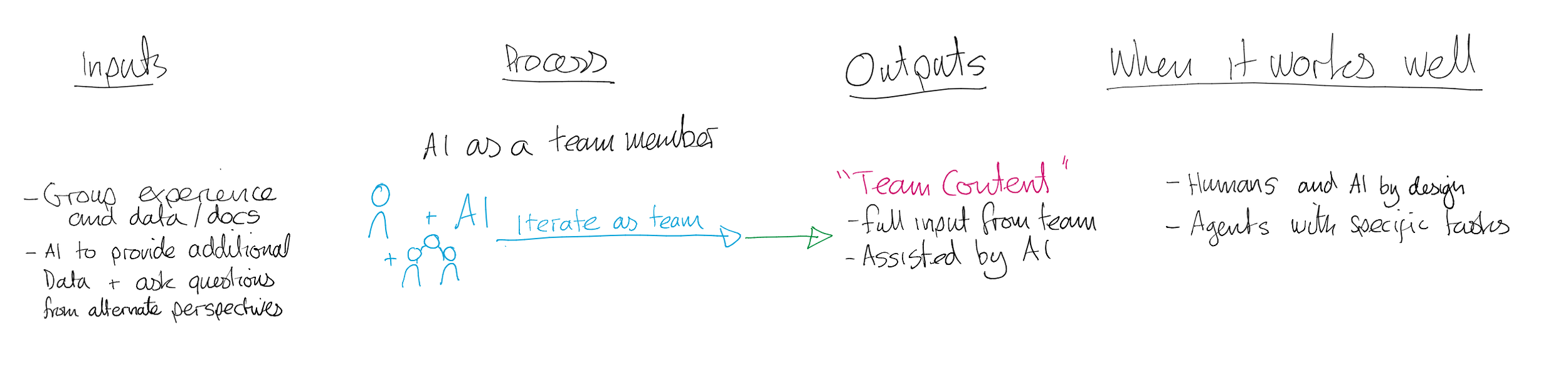

AI as a team member

Accessible description (AI Generated) - AI as a team member

Inputs include group experience, shared data and documents, and AI providing additional data or alternative perspectives. Humans and AI iterate together as a team to produce “Team Content” assisted by AI. This works best when humans and AI are intentionally designed to collaborate, including AI agents with specific tasks.

The last pattern I identified is consciously designing a team comprising people and AI team members with distinct capabilities, where the AI can supplement and augment the human capabilities. These may be agents published to Teams and called from a team chat or meeting. They may be modular agents that perform repeatable tasks and are fed with inputs manually.

I’ve used this approach by creating an agent to help gauge an organisation’s AI and Power Platform governance maturity, using links to Microsoft maturity models. This agent was a designated part of our team: a maturity SME agent. Our human team had a meeting with the client to explore their maturity through an open conversation. The transcripts from that meeting were then delegated to our agent team member, which assessed them, scored them against the maturity models, and produced an exec summary, a SWOT analysis, and the top 3 recommended actions.

This is a way of thinking that not only maximises value from both people and AI, but also starts to help build muscle memory as to how agentic systems can be designed and developed using child or connected agents to deconstruct a process and design it with a more ‘frontier firm’ agentic approach.

Conclusion

From these insights and patterns we can see that, as AI becomes more capable, what differentiates the best organisations will be how they bring human capability together with AI as an advantage: Humans + AI.

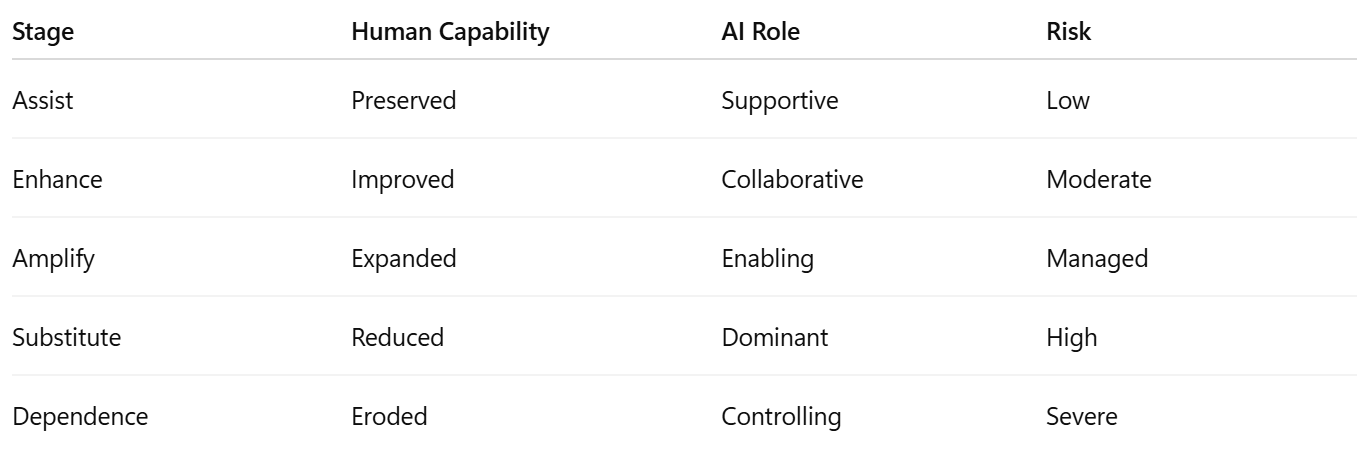

We can translate this into a Human Advantage Framework to give us a way to understand the different ways AI can influence how humans work and make decisions and ensure that we retain the ‘human-ness’ that gives us an advantage.

The spectrum ranges from simple assistance through to unhealthy dependency:

Assist - AI helps reduce friction and speed up routine tasks.

Enhance - AI improves quality, insight, or consistency while humans remain fully engaged.

Amplify - AI extends human capability, enabling people to achieve outcomes previously beyond reach.

Substitute - AI begins replacing human judgement or decision-making in ways that reduce engagement and understanding.

Dependence - Over-reliance on AI leads to skill erosion, weaker critical thinking, and reduced human resilience.

The Human Advantage Framework

Questions leaders should ask:

Does this use of AI strengthen or weaken human understanding?

Are we augmenting judgement or replacing it?

Would employees still know how to operate without AI support?

Is accountability still clearly human?

Are we optimising for convenience or long-term capability?

The goal is to consciously evaluate how we use AI in each use case, so that we remain intentional about where AI creates genuine augmentation and where it may unintentionally diminish the very capabilities we rely upon most.

We must retain our human advantage. In a world increasingly shaped by intelligent systems, qualities such as judgement, empathy, creativity, ethics, contextual understanding, and accountability become more valuable, not less.

The organisations that thrive in the age of AI will not simply be those that automate the fastest, but those that design systems, cultures, and ways of working that keep humans meaningfully engaged, capable, and responsible alongside the technology they use.

The greatest risk of AI may not be replacement, but reduced human capability through convenience. Let’s not let that happen.